How We Built repowise: Architecture of a Codebase Intelligence Platform

Most software engineers spend upwards of 70% of their time reading code rather than writing it. Yet, the tools we use to navigate these complex systems have remained remarkably stagnant. While IDEs have improved, the high-level understanding of a system—its architectural boundaries, its risk centers, and its institutional memory—remains trapped in the heads of senior maintainers or buried in stale Wiki pages.

When we set out to build a code documentation tool that actually lived up to the needs of modern engineering teams, we realized that documentation wasn't the problem; intelligence was. We needed a system that didn't just mirror the code, but understood its history, its dependencies, and its intent. This led us to build repowise, an open-source codebase intelligence platform designed to bridge the gap between raw source code and actionable architectural insight.

In this post, we’ll dive deep into the codebase intelligence architecture of repowise, exploring how we ingest millions of lines of code, mine git history for social signals, and expose it all to AI agents via the Model Context Protocol (MCP).

Why We Built repowise

The motivation for repowise came from a recurring frustration that we call "documentation decay." In any fast-moving repository, documentation is a snapshot of the past. To solve this, we needed a tool that was:

- Automated: Documentation should be a byproduct of the build or CI process.

- Multidimensional: It must combine static analysis (ASTs), social analysis (Git), and semantic analysis (LLMs).

- Agent-Native: It shouldn't just be for humans; it must be the "brain" for AI coding assistants.

The Gap in the Market

Existing tools generally fall into two camps: static documentation generators (like Sphinx or Doxygen) that lack context, and "AI-chat-with-your-repo" tools that often hallucinate because they lack a structured understanding of the codebase's graph. We wanted to build a middle ground—a structured, queryable intelligence layer.

Our Design Principles

- Local-First & Self-Hostable: Code is a company's most valuable IP. repowise is open source under the AGPL-3.0 license and can be self-hosted, so your code and its index stay on your own infrastructure.

- Graph-Centric: Everything in a codebase is a relationship. Files import modules; authors own files; commits change symbols.

- LLM Agnostic: Whether you use Claude, GPT-4, or a local Llama instance via Ollama, the architecture must remain consistent.

High-Level Architecture

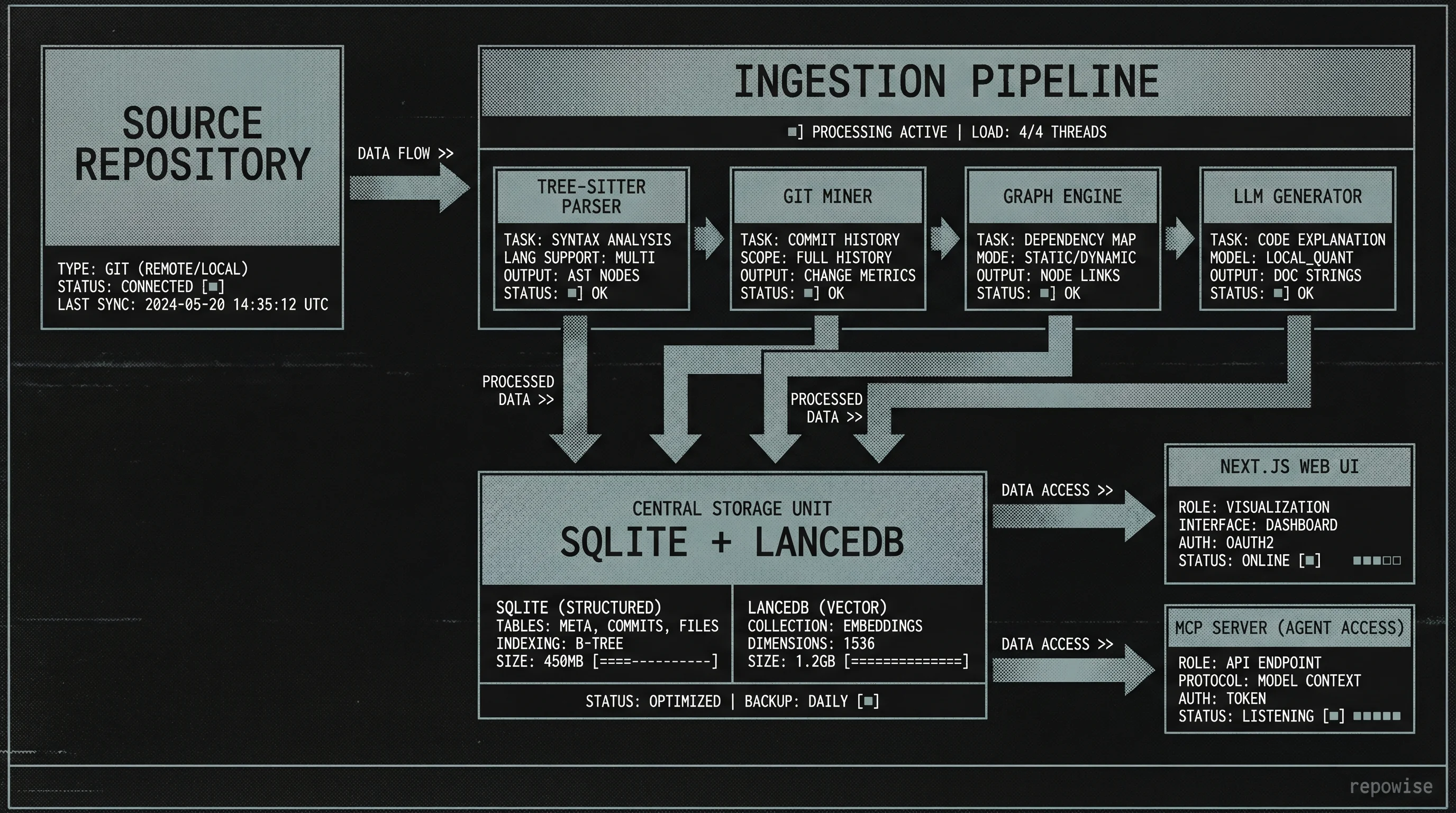

repowise is built as a Python monorepo. We chose Python for its rich ecosystem in data science, AST parsing, and AI integration. The system is divided into three primary layers:

- The Ingestion Pipeline (repowise-core): The heavy lifter that parses code, mines git, and populates the database.

- The CLI (repowise-cli): The entry point for developers to trigger indexing and manage local instances.

- The Server (repowise-server): A FastAPI-based backend that serves the Web UI and the MCP tools.

repowise System Architecture

The Ingestion Pipeline: Building the Brain

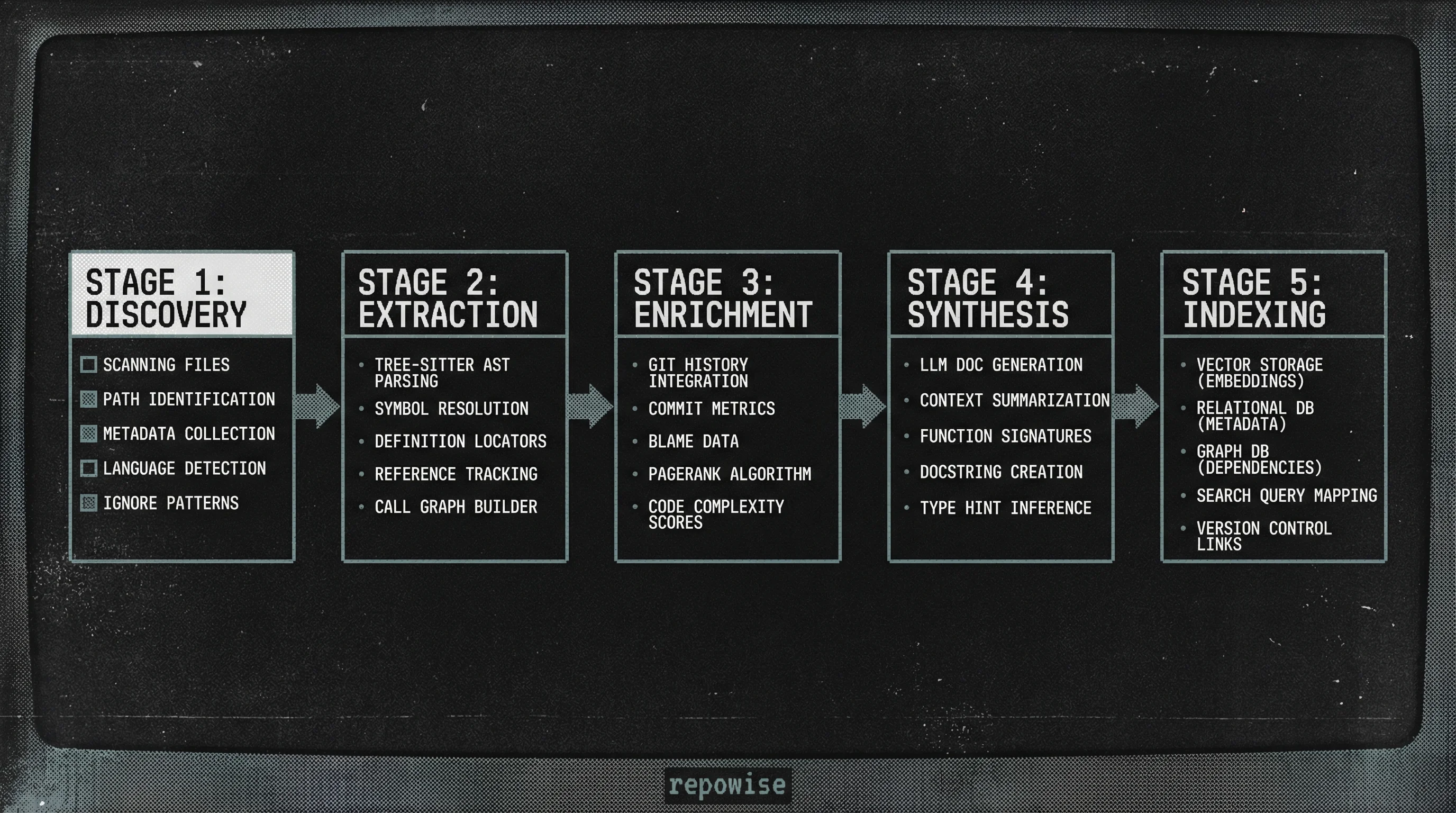

The ingestion pipeline is the most complex part of the codebase intelligence architecture. It transforms unstructured text into a structured knowledge graph through five distinct stages.

1. Parser Layer (10+ Languages)

We use Tree-sitter for parsing. Unlike regex-based parsers, Tree-sitter builds a concrete syntax tree (CST) that allows us to identify symbols (functions, classes, variables) with high precision. By supporting 10+ languages—including Python, TypeScript, Go, and Rust—we can build a cross-language map of a polyglot microservice architecture.
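
To make symbol extraction concrete, here is a minimal sketch. It uses Python's built-in `ast` module as a stand-in for Tree-sitter, so it builds an abstract rather than a concrete syntax tree and only handles Python. The `extract_symbols` helper and the sample source are hypothetical and are not taken from repowise.

```python
import ast

# Hypothetical sample file to index.
SOURCE = '''
import os

class Indexer:
    def run(self, path):
        return os.listdir(path)

def main():
    Indexer().run(".")
'''


def extract_symbols(source: str) -> list[tuple[str, str, int]]:
    """Return (kind, name, line) for every class and function definition."""
    symbols = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.ClassDef):
            symbols.append(("class", node.name, node.lineno))
        elif isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            symbols.append(("function", node.name, node.lineno))
    # ast.walk has no guaranteed source order, so sort by line number.
    return sorted(symbols, key=lambda s: s[2])


symbols = extract_symbols(SOURCE)
for kind, name, line in symbols:
    print(f"{kind:8} {name:8} line {line}")
```

A Tree-sitter based parser would follow the same shape (walk the tree, keep the definition nodes), with one grammar per supported language.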

2. Git Indexer

Code is a living organism. To understand it, you must understand its evolution. Our Git indexer mines the full history to calculate:

- Ownership Maps: Who has the most "knowledge" of a specific module based on lines changed and commit frequency.

- Hotspot Analysis: Identifying files with high churn and high complexity—the primary breeding grounds for bugs.

- Co-change Patterns: "When developers change File A, they almost always change File B." This reveals hidden logical dependencies that static analysis misses.
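
The co-change idea can be sketched in a few lines. The example below assumes each commit has already been reduced to the list of files it touched (for instance from `git log --name-only`). The `co_change` and `confidence` helpers and the sample data are hypothetical, and the scoring is one simple choice rather than repowise's actual metric.

```python
from collections import Counter
from itertools import combinations


def co_change(commits: list[list[str]]) -> tuple[Counter, Counter]:
    """Count per-file churn and how often each pair of files changed together."""
    churn: Counter = Counter()
    pairs: Counter = Counter()
    for files in commits:
        unique = sorted(set(files))
        churn.update(unique)                    # one count per file per commit
        pairs.update(combinations(unique, 2))   # one count per file pair per commit
    return churn, pairs


def confidence(churn: Counter, pairs: Counter, a: str, b: str) -> float:
    """Share of the commits touching `a` that also touched `b`."""
    together = pairs[tuple(sorted((a, b)))]
    return together / churn[a] if churn[a] else 0.0


# Hypothetical history: each inner list is the set of files in one commit.
commits = [
    ["api.py", "schema.py"],
    ["api.py", "schema.py", "README.md"],
    ["api.py"],
    ["schema.py", "api.py"],
]

churn, pairs = co_change(commits)
api_to_schema = confidence(churn, pairs, "api.py", "schema.py")
schema_to_api = confidence(churn, pairs, "schema.py", "api.py")
```

The score is deliberately asymmetric: in this sample every change to `schema.py` also touched `api.py`, but not the other way around. The same `churn` counter is the change-frequency input for hotspot analysis.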

3. Graph Builder

Once we have the symbols and the history, we build a directed dependency graph. We treat files and symbols as nodes and imports/calls as edges. We then run PageRank on this graph to identify "Critical Infrastructure"—files that, if changed, have the highest downstream impact. You can see this in action in our FastAPI dependency graph demo.
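
As an illustration of the ranking step, here is a small power-iteration PageRank over a file-level import graph. It is a sketch under stated assumptions: edges point from the importing file to the imported file, the damping factor of 0.85 and the fixed iteration count are conventional defaults, and the file names are hypothetical. A production pipeline might rely on a graph library instead.

```python
def pagerank(edges, damping=0.85, iterations=50):
    """Power-iteration PageRank over (source, target) edges."""
    nodes = sorted({n for edge in edges for n in edge})
    out_links = {n: [] for n in nodes}
    for src, dst in edges:
        out_links[src].append(dst)

    n = len(nodes)
    rank = {node: 1.0 / n for node in nodes}
    for _ in range(iterations):
        # Nodes with no outgoing edges spread their rank evenly over all nodes.
        dangling = sum(rank[node] for node in nodes if not out_links[node])
        new_rank = {node: (1 - damping) / n + damping * dangling / n for node in nodes}
        for src in nodes:
            for dst in out_links[src]:
                new_rank[dst] += damping * rank[src] / len(out_links[src])
        rank = new_rank
    return rank


# Hypothetical import graph: (importer, imported).
edges = [
    ("api.py", "db.py"),
    ("cli.py", "db.py"),
    ("cli.py", "api.py"),
    ("db.py", "config.py"),
]

rank = pagerank(edges)
most_critical = max(rank, key=rank.get)
```

Because rank flows along import edges, files that many others depend on, directly or transitively, end up with the highest scores.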

4. LLM Generator

This is where the "intelligence" becomes human-readable. We feed the extracted symbols, their dependencies, and their git history into an LLM to generate:

- Module Overviews: What is the purpose of this directory?

- Symbol Documentation: What does this specific function do?

- Freshness Scores: A metric indicating how much the code has diverged from its documentation.

5. Vector Indexer

Finally, we generate embeddings for every chunk of documentation and code, storing them in LanceDB. This enables semantic search—allowing a developer to ask "Where is the authentication logic?" and get results even if the word "authentication" isn't in the filename.
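
The retrieval step behind semantic search can be sketched without any vector database. The example below is a plain-Python stand-in for what a store such as LanceDB does at scale: it ranks stored chunks by cosine similarity to a query vector. The three-dimensional vectors are made up for illustration; real embeddings come from an embedding model and have hundreds of dimensions.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


# Hypothetical index: chunk description -> toy embedding.
INDEX = {
    "auth/session.py: validates login tokens": [0.9, 0.1, 0.0],
    "billing/invoice.py: renders PDF invoices": [0.0, 0.2, 0.9],
    "utils/strings.py: slug helpers": [0.1, 0.9, 0.1],
}


def search(query_vec: list[float], index: dict[str, list[float]], k: int = 2) -> list[str]:
    """Return the k chunks most similar to the query vector."""
    scored = sorted(index.items(), key=lambda item: cosine(query_vec, item[1]), reverse=True)
    return [text for text, _ in scored[:k]]


# Pretend this is the embedding of "Where is the authentication logic?"
query = [0.8, 0.2, 0.1]
results = search(query, INDEX)
```

The match is driven by vector proximity rather than keywords, which is why the top result does not need to contain the word "authentication".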

Data Model and Storage: Why SQLite?

A common question we get is: "Why not use PostgreSQL or Neo4j?"

Our decision to use SQLite for relational data and LanceDB (which stores its data on the local filesystem) for vectors was driven by the requirement for a seamless self-hosted experience.

| Feature | SQLite / LanceDB | PostgreSQL / pgvector |
|---|---|---|
| Setup Overhead | Zero (File-based) | High (Docker/Managed) |
| Portability | Single file (.db) | Requires Export/Import |
| Performance | Excellent for local read-heavy | Better for high-concurrency write |
| Agent Friendly | Can be bundled with the repo | Requires network access |

By using SQLite, a user can run repowise index and have a fully functional intelligence engine living in their ~/.repowise folder without managing a database cluster. For those interested in the specifics, you can learn about repowise's architecture on our dedicated docs page.
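
To show how little setup a file-based store needs, here is a sketch using Python's built-in `sqlite3` module. The two-table schema (`nodes`, `edges`) and the `dependents` helper are assumptions made for illustration and are not repowise's actual schema. The example uses an in-memory database so it is self-contained; passing a file path to `sqlite3.connect` would produce a single portable `.db` file.

```python
import sqlite3

conn = sqlite3.connect(":memory:")  # use a file path for a persistent database
conn.executescript("""
CREATE TABLE nodes (
    id   INTEGER PRIMARY KEY,
    path TEXT UNIQUE NOT NULL
);
CREATE TABLE edges (
    src  INTEGER NOT NULL REFERENCES nodes(id),  -- importing file
    dst  INTEGER NOT NULL REFERENCES nodes(id),  -- imported file
    kind TEXT NOT NULL,
    PRIMARY KEY (src, dst, kind)
);
""")

# Hypothetical files and import edges.
conn.executemany("INSERT INTO nodes (path) VALUES (?)",
                 [("api.py",), ("cli.py",), ("db.py",)])
ids = {path: node_id for node_id, path in conn.execute("SELECT id, path FROM nodes")}
conn.executemany("INSERT INTO edges VALUES (?, ?, 'import')",
                 [(ids["api.py"], ids["db.py"]), (ids["cli.py"], ids["db.py"])])


def dependents(conn: sqlite3.Connection, path: str) -> list[str]:
    """Return the files that import `path`, in alphabetical order."""
    rows = conn.execute(
        """
        SELECT importer.path
        FROM edges
        JOIN nodes AS importer ON importer.id = edges.src
        JOIN nodes AS target   ON target.id   = edges.dst
        WHERE target.path = ?
        ORDER BY importer.path
        """,
        (path,),
    )
    return [row[0] for row in rows]


db_dependents = dependents(conn, "db.py")
```

Structural questions such as "what depends on this file?" become an exact join over the edge table, with no server process involved.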

The Ingestion Pipeline Flow

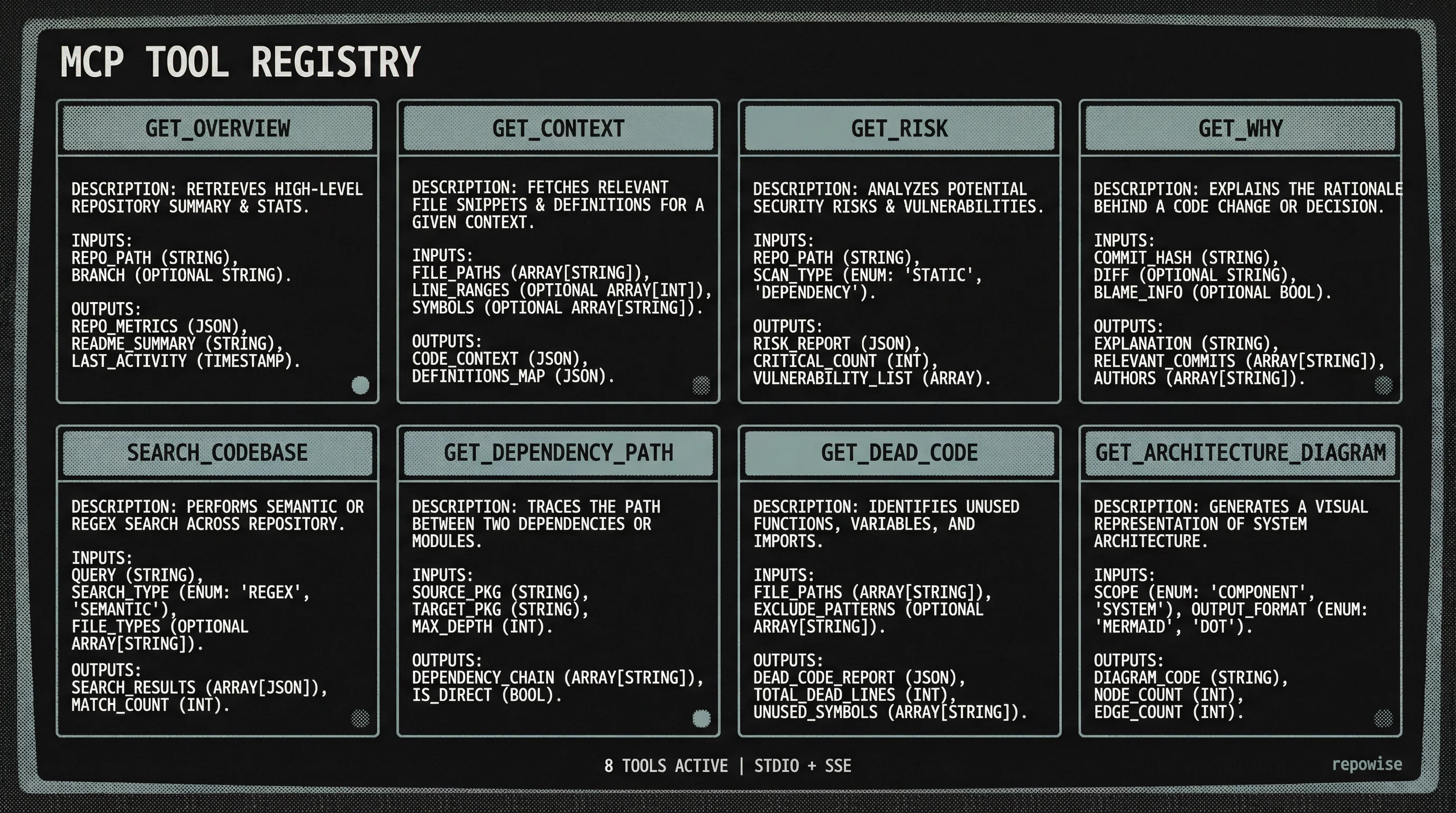

The MCP Server: Intelligence for Agents

The most transformative part of the repowise architecture is the Model Context Protocol (MCP) server. MCP is an open standard that allows AI agents (like Claude Code, Cursor, or Cline) to safely access local tools and data.

Instead of shoving 100,000 lines of code into an LLM's context window (which is expensive and noisy), repowise provides 8 structured tools that the agent can call as needed.

Tool Design Philosophy

We designed our tools to follow the "Information Hierarchy":

- get_overview(): Start high-level.

- get_context(): Zoom into specific files.

- get_risk(): Check if the proposed change is dangerous.

- get_why(): Understand the historical decisions behind the code.

This approach allows an AI agent to navigate a codebase much like a senior engineer would: by building a mental model before diving into the lines of code. You can see all 8 MCP tools in action to understand how they provide context to agents.
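
The "call tools as needed" pattern can be sketched as a small registry and dispatcher. This is plain Python for illustration only: it does not use the MCP SDK or speak the MCP wire protocol, and the tool signatures and return payloads are hypothetical. Only the tool names come from the list above.

```python
from typing import Callable

# Registry mapping tool names to their handler functions.
TOOLS: dict[str, Callable[..., dict]] = {}


def tool(fn: Callable[..., dict]) -> Callable[..., dict]:
    """Register a function as a callable tool under its own name."""
    TOOLS[fn.__name__] = fn
    return fn


@tool
def get_overview() -> dict:
    # Hypothetical payload: a real tool would read from the index.
    return {"modules": ["core", "cli", "server"]}


@tool
def get_context(path: str) -> dict:
    # Hypothetical payload for a single file.
    return {"path": path, "summary": f"summary of {path}"}


def call_tool(name: str, **arguments) -> dict:
    """Dispatch a tool call by name, as an agent runtime would."""
    if name not in TOOLS:
        raise KeyError(f"unknown tool: {name}")
    return TOOLS[name](**arguments)


# An agent starts broad, then zooms in on one file.
overview = call_tool("get_overview")
context = call_tool("get_context", path="core/parser.py")
```

Each call returns a small structured payload, so the agent only pays context-window cost for the detail it actually asks for.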

Technical Decisions We Debated

Why Python Over Go?

While Go is fantastic for CLI tools due to its static binaries, Python won out for repowise because of its dominance in the LLM ecosystem. Libraries like LangChain, LlamaIndex, and various embedding wrappers are first-class citizens in Python. Furthermore, Tree-sitter's Python bindings are mature and highly performant for our use case.

Why AGPL-3.0?

We believe codebase intelligence should be an open standard. By using AGPL-3.0, we ensure that the core engine remains open-source, even if offered as a service. This protects the community's contributions and ensures that "intelligence" doesn't become a proprietary black box.

Why 4 LLM Providers?

We provide native support for OpenAI, Anthropic, Google Gemini, and Ollama. We didn't want users to be locked into a specific provider's pricing or privacy policy. If you have a sensitive codebase, you can point repowise to a local Ollama instance and keep every byte of data on your machine.

MCP Tool Registry

The Web UI: Visualizing Complexity

The repowise Web UI is built with Next.js and React Server Components. We chose this stack to allow for fast, server-side data fetching from the local SQLite database.

One of the highlights of the UI is the Hotspot Analysis. By plotting code complexity against change frequency, we create a quadrant map that tells developers exactly where the "technical debt" is concentrated. You can explore the hotspot analysis demo to see how we visualize these risks.
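
The quadrant idea can be expressed as a short classification function. This sketch assumes each file already has a complexity score and a change count, and it splits each axis at the median. The thresholds, quadrant labels, and sample numbers are all hypothetical choices and are not necessarily what the repowise UI uses.

```python
from statistics import median


def classify_hotspots(files: dict[str, tuple[int, int]]) -> dict[str, str]:
    """Place each file in a quadrant given (complexity, churn) scores."""
    complexity_mid = median(c for c, _ in files.values())
    churn_mid = median(h for _, h in files.values())

    quadrants = {}
    for path, (complexity, churn) in files.items():
        if complexity > complexity_mid and churn > churn_mid:
            quadrants[path] = "hotspot"              # complex and frequently changed
        elif complexity > complexity_mid:
            quadrants[path] = "complex but stable"
        elif churn > churn_mid:
            quadrants[path] = "simple but churning"
        else:
            quadrants[path] = "healthy"
    return quadrants


# Hypothetical metrics: path -> (complexity, number of commits).
metrics = {
    "parser.py": (42, 31),
    "models.py": (35, 3),
    "routes.py": (8, 27),
    "utils.py": (5, 2),
}

quadrants = classify_hotspots(metrics)
```

Files in the "hotspot" quadrant are the ones where refactoring effort is most likely to pay off.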

The UI also includes:

- Interactive Dependency Graphs: Powered by Cytoscape.js or Mermaid.

- Ownership Maps: Showing who owns each module (for example, the ownership map for Starlette) helps teams understand who to tag in PRs.

- Real-time Freshness Tracking: Seeing which docs are "stale" based on recent commits.

What We'd Do Differently

Looking back at the initial build, there are a few things we would change. Early on, we attempted to use a pure vector-search approach for everything. We quickly learned that vectors are bad at structural questions. If you ask "What are the dependents of this class?", a vector search might return "similar" classes, but it won't give you the actual import list. This realization is what led us to the hybrid Graph + Vector approach we use today.

We also underestimated the complexity of "Dead Code Detection" in dynamic languages like Python. We're currently refining our get_dead_code() tool to better handle the nuances of dynamic imports and reflection.

Key Takeaways

Building a codebase intelligence platform is as much about data engineering as it is about AI. To build something like repowise, you need to:

- Treat code as data: Use ASTs and Git history to build a structured foundation.

- Prioritize the Graph: Relationships between files are more important than the content of the files themselves for high-level understanding.

- Design for Agents: The future of coding is collaborative between humans and AI. Your architecture must reflect that by providing structured, low-noise tools via protocols like MCP.

- Stay Local: For developer tools, privacy and speed are paramount. SQLite and local vector stores are your best friends.

If you're interested in seeing the results of this architecture, you can browse live examples of repowise running on popular open-source projects.

FAQ

Q: How does repowise handle very large repositories?
A: We use incremental indexing. After the first scan, we only re-parse files that have changed in the git log, significantly reducing LLM costs and processing time.

Q: Can I use it with my private enterprise code?
A: Yes. Because repowise is self-hostable and supports local LLMs via Ollama, your code never has to leave your infrastructure.

Q: Does it support monorepos?
A: Absolutely. Our Python monorepo architecture was designed specifically to handle multi-package structures and cross-package dependencies.
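
As a rough illustration of the incremental indexing mentioned in the first answer, the sketch below asks git which files changed since the last indexed commit and keeps only those with a supported extension. The function names, the extension set, and the idea of storing a "last indexed commit" are assumptions for the example, not a description of repowise's internals.

```python
import subprocess
from pathlib import PurePosixPath

# Hypothetical subset of extensions the parser layer understands.
SUPPORTED = {".py", ".ts", ".go", ".rs"}


def changed_files(repo_dir: str, last_indexed_commit: str) -> list[str]:
    """List paths that differ between the last indexed commit and HEAD."""
    result = subprocess.run(
        ["git", "-C", repo_dir, "diff", "--name-only", last_indexed_commit, "HEAD"],
        capture_output=True,
        text=True,
        check=True,
    )
    return [line for line in result.stdout.splitlines() if line]


def files_to_reparse(paths: list[str], supported: set[str] = SUPPORTED) -> list[str]:
    """Keep only the changed paths that the parser can handle."""
    return [p for p in paths if PurePosixPath(p).suffix in supported]


# Example with a hard-coded change list instead of a live repository.
to_reparse = files_to_reparse(["src/api.py", "README.md", "web/app.ts"])
```

Only the files in `to_reparse` would need new parsing and LLM generation; everything else can reuse the previous index.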