Codebase RAG buys you less than git history and ownership

A bug report that says “why did this endpoint start failing after last week’s refactor?” is not really a search problem. It is a change-tracing problem, and the codebase RAG landscape tends to blur that distinction until you watch an agent read the same three files twice and still miss the reason the code changed. The useful question is usually: what changed, who touched it, what depends on it, and who should own the fix?

Most teams feel this before they can name it. Retrieval helps find text. It does not, by itself, explain intent.

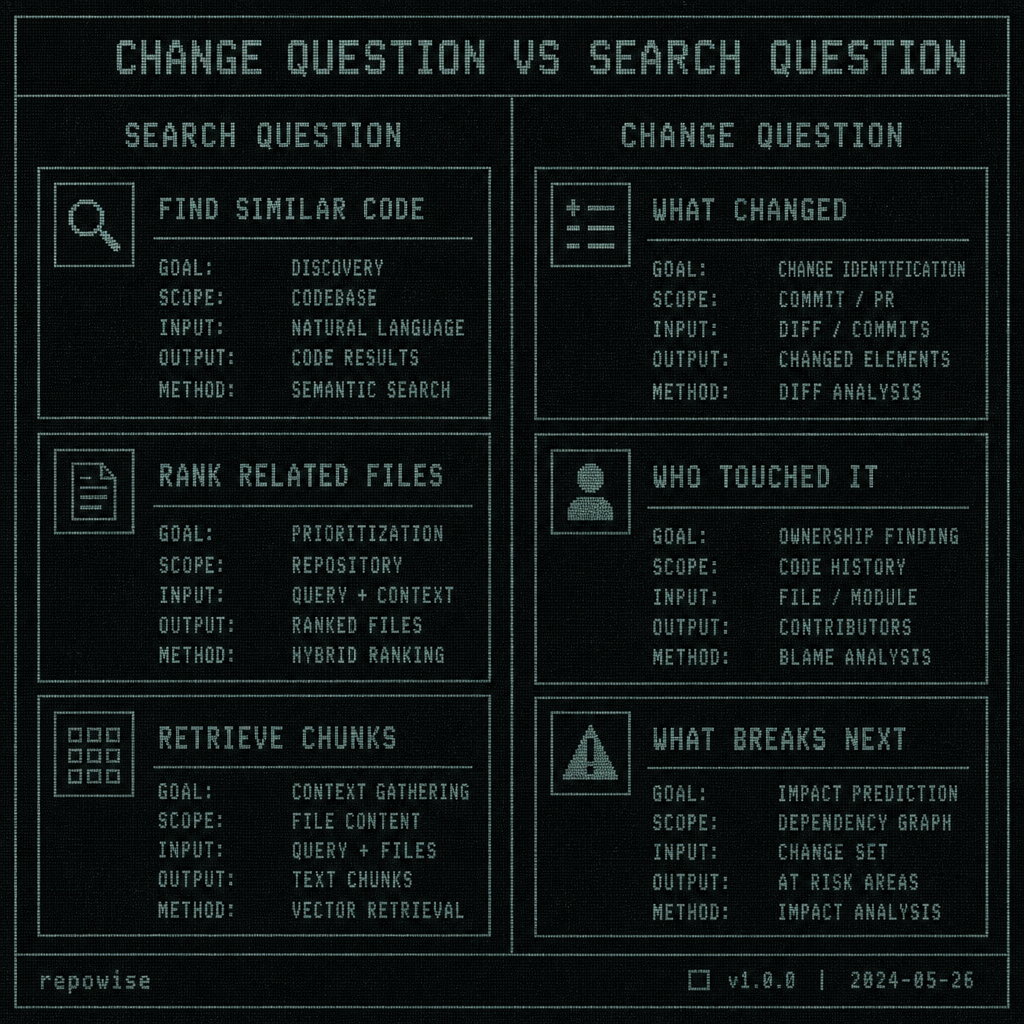

A code question is usually a change question, not a retrieval question

If you ask an agent, “Where is the auth header parsed?” semantic retrieval can probably get you close. If you ask, “Why did this endpoint start failing after last week’s refactor?” you need more than nearby chunks. You need the causal chain: the commit that moved the code, the file that now owns the behavior, the dependency that changed shape, and the person who is most likely to know whether the regression was deliberate.

That is why codebase RAG is best treated as a search layer, not the whole answer. It can point at relevant files. It cannot infer whether a new helper replaced a brittle branch, whether a test gap matters, or whether the fix belongs in the caller or the callee. In practice, the first pass is often: find the changed area, then ask history and ownership to explain it.

This is where the codebase RAG landscape gets over-sold. Vendor demos love “find related files.” Engineers need “tell me what changed, why it changed, and who should care.” Those are different tasks.

CHANGE QUESTION VS SEARCH QUESTION

CHANGE QUESTION VS SEARCH QUESTION

A practical rule: if the question starts with “why,” “who,” or “what else depends on this,” you are already outside pure retrieval. The system needs git history, ownership, and dependency context to answer well.

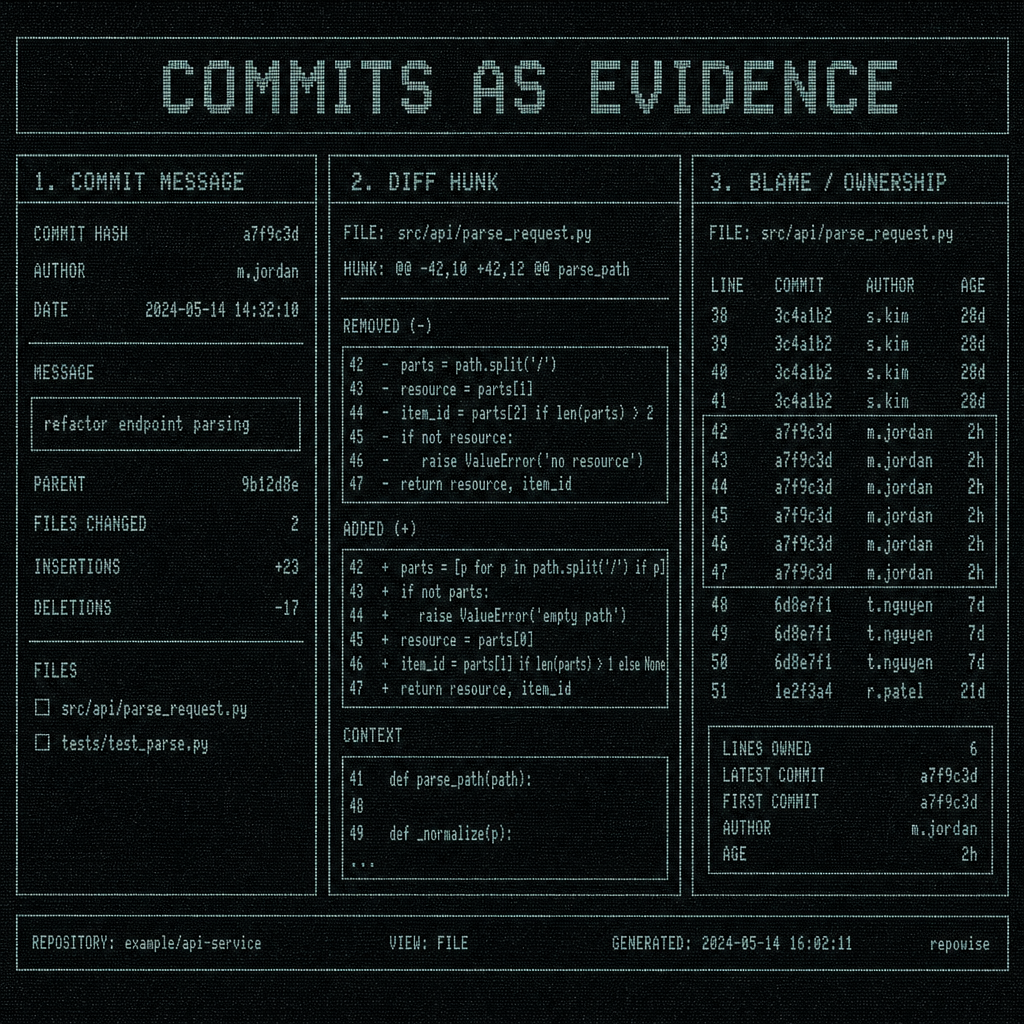

Git history gives you cheaper truth than embeddings for most repo work

For recent code behavior, git history is usually the lower-cost source of truth. It is ordered. It is attributable. It already encodes real edits instead of semantic similarity. Commit messages, diff hunks, and blame/ownership together answer a different class of question than vector similarity does.

Vector search can tell you that two files talk about “timeout” and “retry.” git history can tell you that the timeout was shortened in commit a1b2c3 because an upstream service started returning partial responses, and that the same change touched three tests and one shared helper. That is not a subtle difference. It is the difference between “related text” and “actual explanation.”

This is also why history depth should be configurable, not assumed fixed. A small team working on a fast-moving service may care most about the last 50 commits. A platform team debugging a quarterly regression may need a much deeper slice of the log. Fixed assumptions age badly here.

Public git docs describe git blame as a way to see the last revision that modified each line, and that is still the right mental model: history is a change-tracing mechanism first, a search index second. Sourcegraph’s code intelligence docs make a similar distinction between code search and code intelligence; the useful system is not the one that merely matches strings, but the one that ties symbols, references, and change history together. DeepWiki-style products sit even more squarely on retrieval, which is fine until the question becomes causal.

What surprised me, and what surprised a few teams I’ve watched, is how often the commit message is enough to avoid another round of file reading. Not every commit is good, obviously. Some are useless. But even mediocre history beats a perfect embedding when you need a timeline.

git history signals

COMMITS AS EVIDENCE

COMMITS AS EVIDENCE

Ownership beats semantic similarity when the team is asking who should fix this

A senior engineer’s real question is often not “where is the code?” It is “who should fix this, and who should review it?” That is ownership, not retrieval.

Ownership data changes the routing path immediately. If a TypeScript service starts returning 500s after a refactor, you do not want the agent to wander through every file that mentions the endpoint name. You want it to identify the owner of the module, the people who touched adjacent code last week, and the reviewers who have seen this area before. That is much closer to bus factor and co-change analysis than to semantic search.

A good ownership model also reduces social friction. It helps route a bug to the right reviewer without forcing someone to reverse-engineer the org chart from GitHub activity. It tells you where the blast radius is likely to be, which files tend to move together, and which area is one person away from being fragile. That is the kind of signal that makes an agent useful in a 50-person team.

Here is the practical version: semantic similarity finds the “aboutness” of code. ownership tells you who is accountable for it. Those are not interchangeable.

Worked example: the same bug triage flow with RAG-only versus history-plus-ownership

Suppose a Python service starts failing after a refactor to request parsing. The alert says one endpoint is now timing out. The question is: why did this endpoint break, and who should fix it?

| Step | RAG-only | History + ownership |

|---|---|---|

| 1. Initial search | Retrieves a few files mentioning the endpoint and “timeout” | Identifies the refactor commit, the changed function, and the owning module |

| 2. Reading | Agent opens several related files to find the relevant branch | Agent reads the diff hunk and the nearby tests first |

| 3. Reasoning | Infers a possible connection from similar text | Sees the exact code path that changed and the commit message that explains intent |

| 4. Routing | Guesses a reviewer from file proximity or recent mentions | Routes to the module owner and the last co-change partner |

| 5. Outcome | More file reads, more tool calls, slower confidence | Fewer reads, fewer tool calls, faster identification of the right owner |

The key difference is not just speed. It is that the second path can explain itself. A diff hunk plus ownership plus a recent change log usually gets you to “this refactor removed the fallback that masked partial upstream responses” faster than a pile of embeddings ever will.

This is where retrieval becomes useful as one layer inside a broader codebase intelligence stack. It helps you find the relevant docs or symbols. But the agent still needs the causal context to stop being a fancy grep.

When codebase RAG is worth paying for

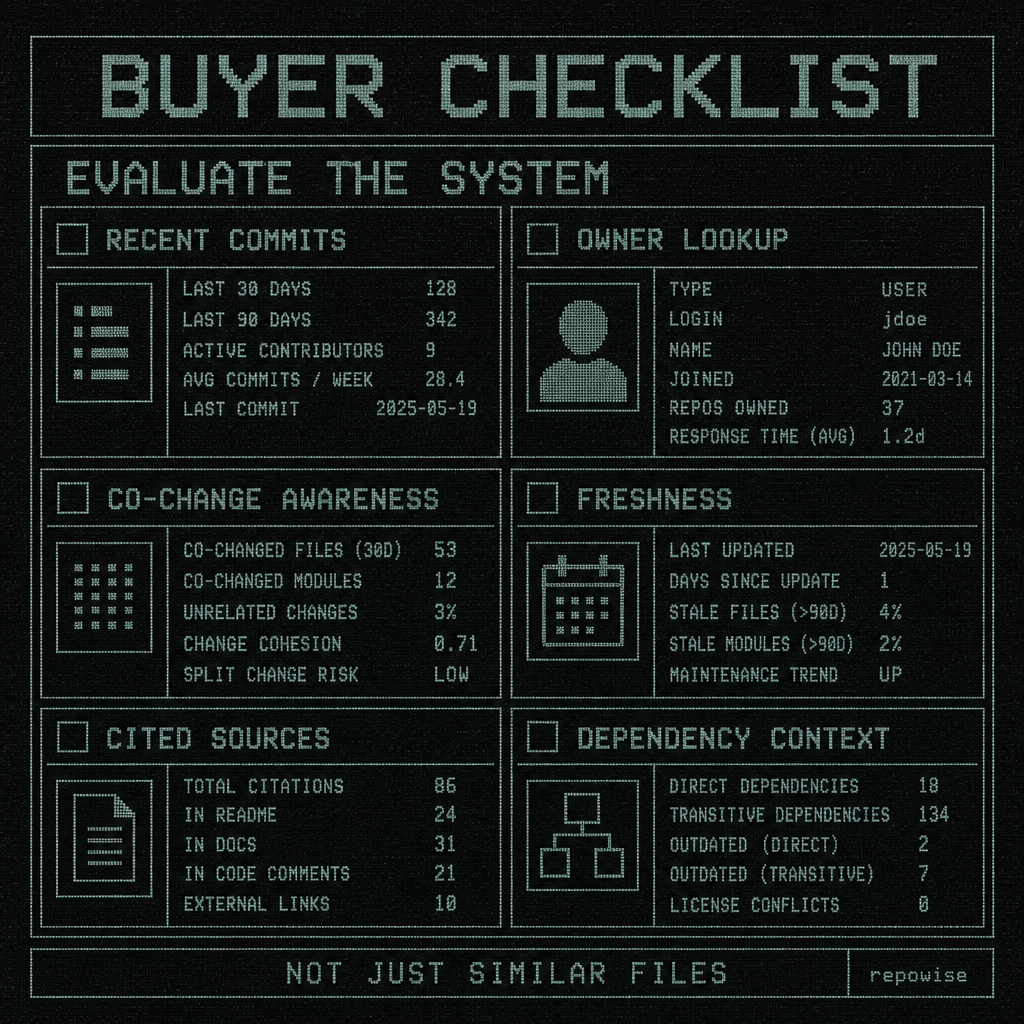

Pay for retrieval when it does more than return similar chunks. The buying line is whether the system can connect text to change, and change to accountability.

A useful checklist looks like this:

- Explain recent changes, not just retrieve related files

- Cite commits or other source evidence

- Surface owners or likely reviewers

- Show freshness of indexed context

- Connect decisions to code paths

- Expose dependency context across files

- Handle recent-commit questions well, not only static docs

That last item matters more than most demos admit. Static documentation is the easy case. The hard case is the code that changed yesterday and the docs that have not caught up yet.

Vendor demos that only show “find related files” are underspecified. That is not a knock on retrieval as a technique. It is a warning that retrieval alone is a thin slice of the problem. Sourcegraph, DeepWiki-like tools, and products such as Repowise all sit somewhere on this spectrum, but the decision lens should stay on explainability, history, and ownership rather than on embeddings as an abstract feature.

The product mention is incidental; the evaluation question is the point.

BUYER CHECKLIST

BUYER CHECKLIST

What to evaluate before you sign a contract

If you are buying a codebase RAG product for a 50-person team, ask for evidence on the stuff that actually shortens debugging time.

- Recent-commit explainability on real bugs, not curated docs

- Ownership coverage for the hottest modules

- Co-change awareness across the last meaningful history window

- Dependency context across callers, callees, and shared helpers

- Freshness of indexed context after new commits

- Proof that the system helps agents read less, not just search more

- Multi-repo or federated query support if your team works across repositories

That last one matters quickly once your org has a backend repo, a frontend repo, and a shared API contract. A tool that can only answer within one repo is fine until the bug crosses boundaries, which is usually when the expensive incidents happen.

If the vendor cannot show a recent regression from commit to owner to fix path, keep walking. If they can only show a semantic search demo, you are buying a better grep, not codebase intelligence.

For teams that want a concrete implementation reference, tools like Repowise, Sourcegraph, and DeepWiki implement this differently. The useful comparison is not “who has embeddings,” but “who can explain why this code changed and who should act on it.”

FAQ

Is codebase RAG enough for understanding a large repository?

Usually not. It is helpful for finding relevant code and docs, but large repos are mostly about change history, ownership, and dependency context. Without those, retrieval can point you at the right neighborhood and still leave you guessing.

Why does git history matter more than embeddings for code search?

Because git history tells you what actually changed, in order, with attribution. Embeddings can find semantically similar text, but they do not explain intent, causality, or who touched the code last.

How does ownership help agents and engineers find the right file faster?

Ownership turns a vague search into a routing problem. Instead of scanning every related file, the agent can start with the module owner, the last co-change partner, or the reviewer who already knows that area.

What should I evaluate in a codebase RAG vendor before buying?

Ask for recent-commit explainability, owner lookup, co-change awareness, freshness of indexed context, and evidence that it reduces file reads or tool calls on real incidents. If your team spans multiple repos, also ask about federated queries and cross-repo dependency awareness.